K. I. S. S.

This is something I keep coming back to. Whether it’s talking about The Unix Way or the difference between complicated and complex, we seem to like complexity.

Developers are drawn to complexity like moths to a flame, often with the same outcome.

– Neal Ford

It’s not surprising. It’s in the nature of the problems we’re trying to solve. We’re often dealing with large problems in real-world situations. People are involved, and people are complex. While they may be somewhat predictable in aggregate, even Hari Seldon’s psychohistory didn’t (and couldn’t) predict the Mule. Or as Ian Malcolm said, “Life finds a way.” Complexity is all around us, and we often feel like the only way to tame it is to build something even more complex.

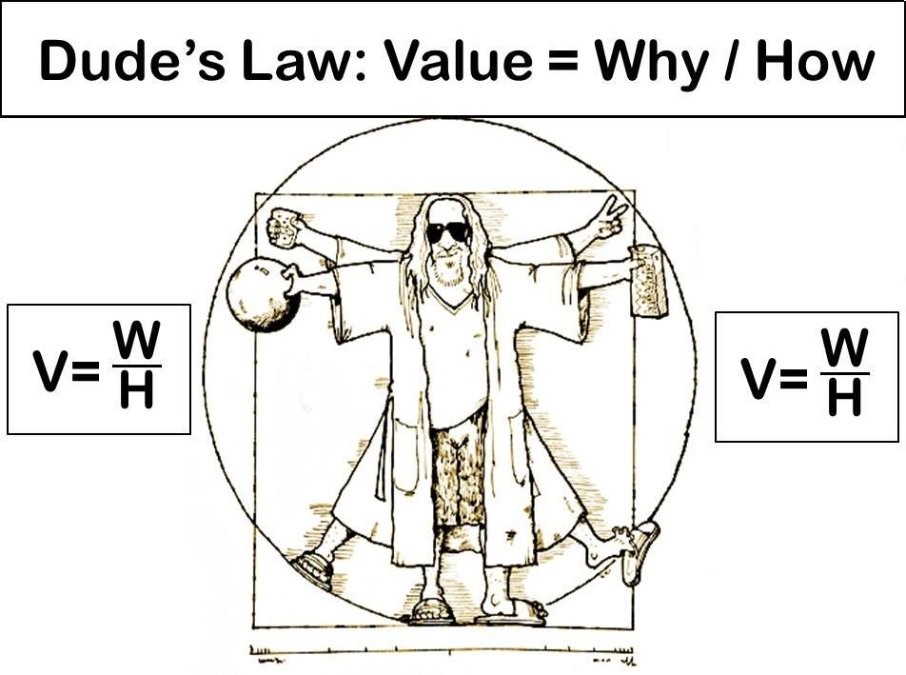

But what if it’s not? What if the best way to deal with complexity is to make things simpler? To build things that are in and of themselves very simple. They do one thing and do it well. They do it in response to an input. You can reason about them. You can make predictions about them. They don’t surprise you. And when they fail, they fail in very specific, predictable, handleable ways. Which means if one of them does something unexpected, it’s easy to figure out why. Then you can figure out how to keep it from doing that. And make it even more predictable.

If you build something that way, something that has very narrow inputs and only a few outputs, the space it operates in, its operational domain, is very small. The smaller the operational domain, the less complex the behavior. And if one part is less complex, the rest of the system can be less complex. The more parts you can make less complex, the more you can simplify the parts that depend on them. It’s a virtuous cycle.
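To make that a little more concrete, here is a minimal sketch of what a small operational domain can look like in code. Everything in it is made up for illustration: `parse_percentage` and the `Parsed` outcomes are hypothetical names, not something from a real system. The point is only that the input is narrow (one string), the set of outcomes is tiny, and every failure is one you can name ahead of time.

```python
from enum import Enum
from typing import Optional, Tuple


class Parsed(Enum):
    """The complete set of outcomes. Nothing else can happen."""
    OK = "ok"
    EMPTY = "empty"
    NOT_A_NUMBER = "not_a_number"
    OUT_OF_RANGE = "out_of_range"


def parse_percentage(text: str) -> Tuple[Parsed, Optional[int]]:
    """Turn a string into a whole-number percentage between 0 and 100.

    Hypothetical example component: one input, four possible outcomes,
    and no exceptions escaping to surprise the caller.
    """
    stripped = text.strip()
    if not stripped:
        return Parsed.EMPTY, None
    # Only plain ASCII digits are accepted, so signs, decimals,
    # and exotic Unicode digits all fail the same predictable way.
    if not (stripped.isascii() and stripped.isdigit()):
        return Parsed.NOT_A_NUMBER, None
    value = int(stripped)
    if value > 100:
        return Parsed.OUT_OF_RANGE, None
    return Parsed.OK, value
```

Whatever calls this only has to handle four cases, which is exactly why the caller gets to be simpler too.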

I can hear you saying that making things less complex is great and all, but the problems we’re looking at are complex, and we need to deal with that complexity. That’s true. Things are complex. We need to deal with them. The thing is, we need to deal with them at the system level, not the component level. We all want to build the big, new, shiny, complex solution to all the world’s problems in one fell swoop, but that’s unlikely to be the best answer.

You can combine all those simple components in complicated ways. Consider the computer I’m using to create this entry. At its core, it’s a collection of gates. 1s and 0s. There are lots of them, and they’re connected in very complicated ways. And I can do almost anything with them. But complication is something we can deal with. Complicated things are knowable. And that’s the key.
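The gate example is easy to play with. What follows is a toy sketch, not how any real hardware or library does it: it starts from a single NAND function, builds the other gates out of it, and wires those into a ripple-carry adder. All of the function names are my own, and bits are stored least-significant first purely for convenience.

```python
from typing import List, Tuple


def nand(a: int, b: int) -> int:
    """The one primitive. Everything below is just wiring."""
    return 0 if (a and b) else 1


def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor(a: int, b: int) -> int:
    return and_(or_(a, b), nand(a, b))


def full_adder(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """Add three bits, returning (sum_bit, carry_out)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out


def add_bits(x_bits: List[int], y_bits: List[int]) -> List[int]:
    """Ripple-carry addition of two equal-length, least-significant-first bit lists."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        total, carry = full_adder(a, b, carry)
        result.append(total)
    result.append(carry)  # the final carry becomes the top bit
    return result


def to_bits(n: int, width: int) -> List[int]:
    """Least-significant-first bits of a non-negative integer."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits: List[int]) -> int:
    return sum(bit << i for i, bit in enumerate(bits))
```

Calling `from_bits(add_bits(to_bits(3, 4), to_bits(5, 4)))` gives 8. No single piece here is hard to reason about. The arrangement is complicated, but you can trace every step of it, which is the difference I’m getting at.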

We can almost always handle complex behavior with a complicated arrangement of simple, straightforward steps. It’s only for the rest of the cases that you need to build a complex solution.

So is your complex problem one that can be solved with a complicated arrangement of simple things, or do you need a complex solution? The answer to that question is “It Depends”.