Error Types

Hypothesis testing is all about the null hypothesis. You assume it’s true and then look for evidence that it’s false. Consider a fire alarm. The null hypothesis is that there’s no fire. If the alarm is not ringing you assume there’s no fire. If it is ringing then the assumption is that there is a fire. The state of the alarm is a simple binary. It’s either ringing or it’s not. And either there is a fire or there’s not. So you have the following truth table:

|         | Alarm ringing | Alarm not ringing |
|---------|---------------|-------------------|
| No fire | Type I        | CORRECT           |
| Fire    | CORRECT       | Type II           |

Simple and clean. Two correct states and two error cases: the Type I, or false positive, and the Type II, or false negative.
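If it helps to see the table as code, here is a minimal sketch in Python. The names `Outcome` and `classify` are made up for illustration; nothing here comes from a real library beyond the standard `enum` module.

```python
from enum import Enum


class Outcome(Enum):
    """The three kinds of cell in the truth table (hypothetical names)."""
    CORRECT = "correct"
    TYPE_I = "Type I (false positive)"
    TYPE_II = "Type II (false negative)"


def classify(alarm_ringing: bool, fire: bool) -> Outcome:
    """Map one (alarm state, reality) pair onto the truth table."""
    if alarm_ringing and not fire:
        # The alarm says "fire" but there is none.
        return Outcome.TYPE_I
    if fire and not alarm_ringing:
        # There is a fire but the alarm stayed silent.
        return Outcome.TYPE_II
    # Alarm and reality agree.
    return Outcome.CORRECT
```

Calling `classify(True, False)` gives `Outcome.TYPE_I`, and `classify(False, True)` gives `Outcome.TYPE_II`. The other two combinations are the correct states.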

As developers we need to deal with this kind of problem all the time. One of the more common cases is alerting, or error detection. If you have a perfect signal for an error case then you can always do the right thing. If your service is not running, that’s an error. Simple. But what if you’re not getting the signal that your service is running? What type of error is that? Does that mean your service isn’t running or that the signal is blocked? What do you do in that case?

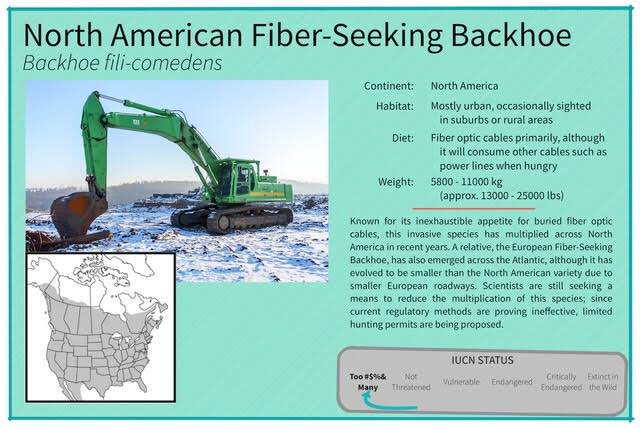

Well, it depends. Mostly it depends on the various costs of being wrong and benefits of being right. For monitoring the datacenter, most of our alerts will fire if we don’t get the signal. That means accepting the risk of a Type I error, and we do that for a few reasons. First, even if the DC is OK, the fact that we’re not getting a signal is a problem, and the cost of DC outages is high. Even if it’s not something in our control (e.g. the fiber-seeking backhoe strikes again), we still want to know so we can do something about it. Second, the actual cost of the alert is pretty low. Just a phone call, albeit potentially in the middle of the night. Actually, the cost for a single event is low.
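One way to make the "costs of being wrong" argument concrete is to compare the expected cost of the two policies when the signal goes missing. This is only a sketch: the function `expected_cost` and every number in the usage note are hypothetical, not measurements from our datacenter.

```python
def expected_cost(
    p_outage: float,
    cost_false_alarm: float,
    cost_missed_outage: float,
    fire_alert: bool,
) -> float:
    """Expected cost of a policy, given that the signal is missing.

    p_outage is the (assumed) probability that a missing signal
    really means an outage. Costs are in arbitrary units.
    """
    if fire_alert:
        # Alerting is only wrong when there is no outage (Type I).
        return (1.0 - p_outage) * cost_false_alarm
    # Staying silent is only wrong when there is an outage (Type II).
    return p_outage * cost_missed_outage
```

With made-up inputs of a 5% chance of a real outage, a false alarm costing 1 unit, and a missed outage costing 500 units, alerting has an expected cost of about 0.95 while staying silent costs about 25. When misses are that much more expensive than false alarms, firing on a missing signal wins even if most alerts turn out to be spurious.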

The problem is that this is a distributed system. There are latencies. There are networks. There are many reasons why we might not get a datum on time, and if we fired the alert every time that happened we’d quickly succumb to alert fatigue and start ignoring them. That’s not an error, but it is a real problem.

So to avoid that we build some latency into the system. The signal needs to be bad for some time before we fire. The longer the time, the less likely we are to have a Type I error. Unfortunately, the longer the time, the more likely we are to have a Type II error, a false negative, at least for as long as we’re waiting. And the cost of those is high. Hundreds of people and thousands of tasks failing. We really don’t want that, so it’s a balance.
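The "bad for some time before we fire" idea can be sketched as a small stateful check. This is an illustration under assumptions, not our actual alerting code: the class `MissingSignalAlert`, its `grace_seconds` parameter, and the `observe` method are all hypothetical names.

```python
from typing import Optional


class MissingSignalAlert:
    """Fire only after the signal has been missing for grace_seconds."""

    def __init__(self, grace_seconds: float) -> None:
        self.grace_seconds = grace_seconds
        # Time at which the current run of missing signals started.
        self._missing_since: Optional[float] = None

    def observe(self, now: float, signal_ok: bool) -> bool:
        """Record one observation; return True if the alert should fire."""
        if signal_ok:
            # Any good datum resets the clock.
            self._missing_since = None
            return False
        if self._missing_since is None:
            self._missing_since = now
        # Fire once the signal has been bad for the full grace period.
        return now - self._missing_since >= self.grace_seconds
```

With `grace_seconds=60`, a 30 second network blip never pages anyone, which cuts down on Type I errors. The price is that a real outage goes unreported for its first 60 seconds, which is exactly the Type II window described above. Tuning `grace_seconds` is how you pick your spot on that trade-off.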

Your situation might be different. For mission-critical safety decisions you might choose to eliminate Type II errors at the price of more Type I errors. It’s not comfortable, but a spurious hard braking event is better than no brakes and hitting something. Other things might be OK to just ignore.

And that completely skips the Type III (the right answer to the wrong problem) and Type IV (the right answer for the wrong reason) errors, but those are topics for another time.